For example, when I showed the picture below to my Taiwanese coworker he immediately said that these were multiple instance of Chinese "one".

Here are 4 of those images close-up. Classical OCR approaches, have trouble with these characters.

This is a common problem for high-noise domain like camera pictures and digital text rasterized at low resolution. Some results suggest that techniques from Machine Vision can help.

For low-noise domains like Google Books and broken PDF indexing, shortcomings of traditional OCR systems are due to

1) Large number of classes (100k letters in Unicode 6.0)

2) Non-trivial variation within classes

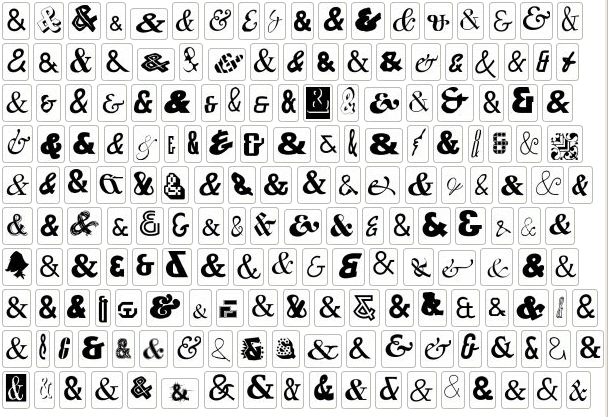

Example of "non-trivial variation"

I found over 100k distinct instances of digital letter 'A' from just one day's crawl worth of documents from the web. Some more examples are here

Chances are that the ideas for human-level classifier are out there. They just haven't been implemented and tested in realistic conditions. We need someone with ML/Vision background to come to Google and implement a great character classifier.

You'd have a large impact if your ideas become part of Tesseract. Through books alone, your code will be run on books from 42 libraries. And since Tesseract is open-source, you'd be contributing to the main OCR effort in the open-source community.

You will get a ton of data, resources and smart people around you. It's a very low bureocracy place. You could run Matlab code on 10k cores if you really wanted, and I know someone who has launched 200k core jobs for a personal project. The infrastructure also makes things easier. Google's MapReduce can sort a petabyte of data (10 trillion strings) with 8000 machines in just 30 mins. Some of the work in our team used features coming from distributed deep belief infrastructure.

In order to get an internship position, you must pass general technical screen that I have no control of. If you are interested in more details, you could contact me directly.

Link to apply is here

289 comments:

1 – 200 of 289 Newer› Newest»Seems a backwards approach to me: you are ignoring context. Even with the example of Chinese 1s, the observer picks up a few obvious cases and then can conclude that the rest are variants. Context means, for example, that in a string of characters likely to be a word, when non-letters are embedded in the string, they usually need to be replaced by letters that make the string make sense as a word. The sense comes from a dictionary at one level, and from the sentence the word is in at the next level making grammatical sense.

There is also the challenge, which I heard a librarian express as a dream, of 3D imaging of books at sufficient resolution and contrast to machine read them without opening them and turning the pages. Soft x-ray microbeams (for scatter reduction) might work here, using computed tomography combined with compressive sensing, especially given the binary nature of most text (black ink on white paper). This would speed scanning of books by orders of magnitude, and eliminate the hard to old, brittle books.

Yours, -Dick Gordon gordonr@cc.umanitoba.ca

“eliminate the hard” should be “eliminate the harm”

Context is important too. Google Translate Group is working on that.

Better work with them, then. OCR one character at a time seems to me a nonstarter. What you can do is loop as follows:

1. recognize a word

2. recognize its characters

3. find best match in dictionary

4. construct an image in the font of the whole word and alternative words

5. find best match of the whole image of the word to the constructed images

Repeat above at sentence level.

Anyone at Google scanning closed books? Thanks.

Yours, -Dick

Nice information thank you so much

Deep Learning Training in Hyderabad

One of the finest thing to do in theinternship world is doing internship in google as they will peak one of the intern after the course. site that is very helpful for the academic papers writing.

Good Post! Thank you so much for sharing this Machine learning post, it was so good to read and useful to improve my knowledge as updated one, keep blogging

Machine Learning Training in Chennai | best machine learning institute in chennai | Machine Learning course in chennai

Thanks for sharing such an informative post....

Real Trainings provide all IT-Training Course information in Hyderabad, Bangalore, Chennai . Here students can Compare all Courses with all detailed information. In BizTalk Server Training we provide courses like BizTalk Server Training, BizTalk Server online Training etc...

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

ppc services in hyderabad

digital marketing companies in hyderabad

pay per click services in india

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

website designing Internships in hyderabad

best website designing companies in hyderabad

website designer in Hyderabad

web designing company in Hyderabad

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

best seo company in hyderabad

best seo services in hyderabad

digital marketing companies in india

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

ppc services in hyderabad

digital marketing companies in hyderabad

pay per click services in india

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

php internships in hyderabad

best php development companies in hyderabad

php application development

php web application development company india

software testing internships in Hyderabad

Java Internships in hyderabad

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

php internships in hyderabad

best php development companies in hyderabad

php application development

php web application development company india

software testing internships in Hyderabad

Java Internships in hyderabad

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

internship program

computer science internships

internship program in it company

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

ppc services in hyderabad

seo company in hyderabad

seo companies in hyderabad

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

best ios development companies in hyderabad

web designing company in Hyderabad

website designer in Hyderabad,

top mobile app development companies

ios mobile app development company

best java development companies in hyderabad

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

php internships in hyderabad

best php development companies in hyderabad

php application development

php web application development company india

student internships

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

wordpress development hyderabad

digital marketing companies in hyderabad

pay per click services in india

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

student internships

engineering internships in hyderabad

internship courses in hyderabad

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

top android developers

top mobile app development companies

ios mobile app development company

student internships

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

it internships in Hyderabad

summer internships in hyderabad

Student Internships in Hyderabad

dinternship for marketing studentsd

free internship programs

internship program

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

it internships in Hyderabad

summer internships in hyderabad

Student Internships in Hyderabad

dinternship for marketing studentsd

free internship programs

internship program

Currently Python is the most popular Language in IT. Python adopted as a language of choice for almost all the domain in IT including Web Development, Cloud Computing (AWS, OpenStack, VMware, Google Cloud, etc.. ),Read More

myTectra the Market Leader in Artificial intelligence training in Bangalore

myTectra offers Artificial intelligence training in Bangalore using Class Room. myTectra offers Live Online Design Patterns Training Globally.Read More

myTectra the Market Leader in Machine Learning Training in Bangalore myTectra offers Machine Learning Training in Bangalore using Class Room. myTectra offers Live Online Machine Learning Training Globally. Read More

Such a great blog. Got many useful inforamations about this thechnology.

Very useful for the freshers. Good keep it up.

machine learning course

machine learning certification

machine learning training

machine learning training course

Hi, I really appreciate your work and every time I updated from your blog. Such a useful blog. Anyone looking for Digital Marketing Services in Hyderabad ? Thanks for your kind information.

This information is impressive; I am inspired by your post writing style & how continuously you describe this topic. Career3S is one best side for post your vacancy and you will get the best resources. Visit our page Employer .

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

internships in hyderabad for bba students

internships for freshers in hyderabad

internships in gachibowli for it students

internship for engineering students in gachibowli

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

digital marketing internships in hyderabad

internship in hyderabad for cse

internships in hyderabad for cse students

internship program in banjarahills

student internships in banjarahills

summer internships in madhapur

nice post and please provide more information.thanks for sharing

educational software companies

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

internships for freshers in hyderabad

internships in hyderabad for bba students

internships for freshers in hyderabad

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

paid summer internships in kurnool

internships in kurnool for bba students

java internship in guntur

paid internships in Guntur

internships for freshers in vijayawada

project internships in vijayawada

Useful Information, your blog is sharing unique information....

Thanks for sharing!!!

internships for freshers in hyderabad

internships in hyderabad for bba students

internships for freshers in hyderabad

Thank you for sharing the inforamtion.valuable information and content dispalyed

digital marketing internships in hyderabad

student internships in banjarahills

it internships in madhapur

internship program in banjarahills

Nice Post thanks for sharing. thanks

best regards

Zenoxx Knowledge

IT Corporate Training in India

Corporate Training Companies In India

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

digital marketinginternship in vijayawada

companies offering internship in Vijaywada

internships in warangal for cse students

internship providing companies in Warangal

internship in kothagudem for cse

paid summer internships in kothagudem

Hi Your Blog is very nice!!

As The Leading Web Development Company in Hyderabad, Spark Infosys provides PHP Training, SEO Training, Web Designing Training. We provide Individual Training as well as Corporate Training etc..

Nice post, impressive. It’s quite different from other posts. Thanks to share valuable post.

educational software companies

Really an awesome blog for this technology. Must read it and personally it is very useful

for me. Thanks for posting this blog and it is very innovative one.

corporate training in chennai

corporate training course in chennai

ielts coaching in chennai

machine learning course in chennai

oracle training in chennai

corporate training in t.nagar

corporate training in omr

Digital marketing training in ChennaiThe Professional Certification is a comprehensive Digital Marketing course aimed to provide Marketing Professionals, Job-seekers, Business Owners, Students, and Home-makers with an indepth understanding of Digital Marketing.

This blog is good information

Website Design Company in Bangalore | Best Web Design Company in Bangalore | Website Designing in Bangalore

I am really thankful to the admin for sharing such a lucrative and useful blog with us. Most of the magnates and companies are using IT services for the business profit. It can enhance the business area as well.

SEO Company in Lucknow | IT Company in Lucknow

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

summer internships in madhapur

student internships in banjarahills

internship opportunities in banjarahills

apply for internship in kphb

internship companies in kphb

internship training in kukatpally

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

summer internships in madhapur

student internships in banjarahills

internship opportunities in banjarahills

apply for internship in kphb

internship companies in kphb

internship training in kukatpally

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

summer internships in madhapur

student internships in banjarahills

internship opportunities in banjarahills

apply for internship in kphb

internship companies in kphb

internship training in kukatpally

Thanks for sharing this info. Keep sharing Digital Marketing Tips!

Digital Marketing Blog

After completing the course of digital marketing than you have to do an internship.igital marketing is constantly changing field so you have to keep yourself updated and constantly work with the latest change. This is all you get to learn in an internship. various reasons which show the importance of internship.

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

php internships in hyderabad

software testing internships in Hyderabad

Java Internships in hyderabad

best website designing companies in hyderabad

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

best ios development companies in hyderabad

e-commerce site development company in hyderabad

best software testing companies in hyderabad

website designer in Hyderabad

Good resources. Thanks Java Tutorials | Learn Java

Thanks for Sharing a Very Informative Information & it’s really helpful for us

Corporate Training in Delhi

Corporate Training in Noida

Corporate Training in Gurgoan

Its a wonderful post and very helpful, thanks for all this information. You are including better information.

Summer Training in Noida

Summer Training Course in Noida

Summer Training institute in Noida

Great initiative by your company in corporate industry which is really helpful for us. For best services of corporate training in Delhi, Noida & Gurgaon with Placement call us at -+91-9311002620 or visit our website -https://www.htsindia.com/our-services/corporate-training .

well said! This content is the right way to enhance your knowledge and I like it this post. I want more new updates and keep posting...!

Tableau Training in Chennai

Tableau Course in Chennai

Spark Training in Chennai

Oracle Training in Chennai

Oracle DBA Training in Chennai

Social Media Marketing Courses in Chennai

Tableau Training in Chennai

Tableau Course in Chennai

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

software testing internships in Hyderabad

digital marketing internships in hyderabad

engineering internships in hyderabad

best website designing companies in hyderabad

Thank you for the post.I really enjoyed reading your article.

Data Science Training in Hyderabad

Hadoop Training in Hyderabad

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

php internships in hyderabad

best php development companies in hyderabad

engineering internships in hyderabad

best website designing companies in hyderabad

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

software testing internships in Hyderabad

digital marketing internships in hyderabad

engineering internships in hyderabad

best website designing companies in hyderabad

Amazing blog with the latest information. Your blog helps me to improve myself in many ways. Looking forward for more like this.

Data Science Course in Chennai

Data Science Training in Chennai

Data Science Training in Anna Nagar

Machine Learning Course in Chennai

Machine Learning Training in Chennai

RPA Training in Chennai

Robotics Process Automation Training in Chennai

Data Science Course in Chennai

Data Science Training in Chennai

Paglaum ikaw makabaton og mas makaiikag ug makapaikag nga mga artikulo. Daghang salamat

lưới chống chuột

cửa lưới dạng xếp

cửa lưới tự cuốn

cửa lưới chống muỗi hà nội

Thanks for providing a useful article containing valuable information. start learning the best online software courses.

Workday HCM Online Training

Good article with the very informative content and here Maxwell Global Software is one of the best web development company in hyderabad

India, having an expertise group of web designers with latest web skills who can give the best solution to your business.

Thank you for your post. This is excellent information. It is amazing and wonderful to visit your site.

best Digital marketing services in kukatpally

top Digital marketing agencies in kphb

digital marketing company in gachibowli

list of Digital marketing companies

天啊!这是一篇很棒的文章。谢谢分享!

Cửa tiệm bán cửa lưới chống muỗi TP Cần Thơ

Cô gái hé lộ điểm bán cửa lưới chống muỗi tại Hà Đông tốt

Cô gái trẻ hé lộ cửa hàng cửa lưới chống muỗi tại TP Đà Nẵng

Cô gái tiết lộ điểm bán cửa lưới chống muỗi thị xã Sơn Tây tốt nhất

SKARtec Digital Marketing Academy is an institute dedicated to meet the integrated marketing needs of the industry. Our Digital Marketing program is ideal for those, who wish to manage a successful and sustainable digital marketing strategy.

Our digital marketing course in Chennai is targeted at those who are desirous of taking advantage of career opportunities in digital marketing. Join the very best Digital Marketing Course in Chennai. Get trained by an expert who will enrich you with the latest digital trends.

This digital marketing certification explores all the core digital marketing and management concepts, techniques and disciplines from planning, implementation and measurement to success and failure factors. Enrolling in this marketing course will prepare you to join an exclusive community of highly-recognized digital marketing experts.

digital marketing course in chennai

digital marketing training in chennai

Ճանաչելով ձեր հոդվածը շատ լավ է: Շատ շնորհակալ եմ հետաքրքիր հոդվածով կիսվելու համար

giảo cổ lam 5 lá

giảo cổ lam 7 lá

giảo cổ lam khô

giảo cổ lam 9 lá

Có lẽ cần phải trải qua tuổi thanh xuân mới có thể hiểu được( bài tập toán cho bé chuẩn bị vào lớp 1 ) tuổi xuân là khoảng thời gian ta( dạy trẻ học toán tư duy ) sống ích kỷ biết chừng nào. Có lúc nghĩ, sở dĩ tình yêu cần phải đi một vòng tròn lớn như vậy, phải trả một cái giá quá đắt như thế,( phương pháp dạy toán cho trẻ mầm non ) là bởi vì nó đến không đúng thời điểm. Khi có được( Toán mầm non ) tình yêu, chúng ta thiếu đi trí tuệ. Đợi đến khi( Bé học đếm số ) có đủ trí tuệ, chúng ta đã không còn sức lực để yêu một tình yêu thuần khiết nữa.

Attend The Python Training in Bangalore From ExcelR. Practical Python Training in Bangalore Sessions With Assured Placement Support From Experienced Faculty. ExcelR Offers The Python Training in Bangalore.

Good info.

Freshpani is providing online water delivery service currently in BTM, Bangalore you can find more details at Freshpani.com

Online Water Delivery | Bangalore Drinking Water Home Delivery Service | Packaged Drinking Water | Bottled Water Supplier

Thanks for the article. Winskje jo binne altyd lokkich en suksesfol

giảo cổ lam giảm cân

giảo cổ lam giảm béo

giảo cổ lam giá bao nhiêu

giảo cổ lam ở đâu tốt nhất

this post are edifying in Classified Submission Site List India . An obligation of appreciation is all together for sharing this summary, Actually I found on different regions and after that proceeded this site so I found this is unfathomably improved and related.

https://myseokhazana.com/

Extraordinary Article! I truly acknowledge this.You are so wonderful! This issue has and still is so significant and you have tended to it so Informative.

Contact us :- https://myseokhazana.com

Your article is very interesting. Also visit our website at:

web design company in chennai

web designing company in chennai

This is the most predictable blog which I have ever seen. I should need to express, this post will help me a ton to help my orchestrating on the SERP. Much restoring for sharing.

https://myseokhazana.com

THANKS FOR THE INFORMATION....

Digital Marketing Internship Program in BangaloreDigital Marketing Internship Program in Bangalore

THANKS FOR THE INFORMATION....

Digital Marketing Internship Program in BangaloreDigital Marketing Internship Program in Bangalore

Excellent machine learning blog,thanks for sharing...

Seo Internship in Bangalore

Smo Internship in Bangalore

Digital Marketing Internship Program in Bangalore

Thank you for your post. This is useful information.

Here we provide our special one's.

creative website designing services

top Mobile App Development Companies

list of Digital marketing companies

thankyou ,your blog gives more valueable information.Digital marketing institute in Chennai

Hi, probably our entry may be off topic but anyways, I have been surfing around your blog and it looks very professional. It’s obvious you know your topic and you appear fervent about it. I’m developing a fresh blog plus I’m struggling to make it look good, as well as offer the best quality content. I have learned much at your web site and also I anticipate alot more articles and will be coming back soon. Thanks you.

educational software companies

Những gì bạn chia sẻ tôi rất thích

Bán chó Bull Pháp mầu lilac giữa tháng 5 năm 2019

Tiệm bán chó phốc sóc giá rẻ tốt nhất

Bán chó Poodle đầu tháng 5 năm 2019

Công ty mua bán mèo cảnh tốt

Chó tuyết Alaska giá bao nhiêu?

hihi

bồn ngâm massage chân

bon mat xa

chậu ngâm chân giá rẻ

bồn mát xa

ok nice

Thực đơn giảm cân tốt và hiệu quả

Mua lều xông hơi hồng ngoại tại Phú Thọ

Giảm cân nhanh trong 1 tuần

Xông mặt trị mụn đúng cách

Lều xông hơi loại nào tốt để giảm cân

Nice blog Thank you very much for the information you shared.

Digital Marketing Internship In Bangalore

Seo Internship In Bangalore

Internship Programs in Bangalore

Nice blog Thank you.

Seo Internship In Bangalore

Internship Programs in Bangalore

Digital Marketing Internship In Bangalore

Thanks for your information, you have such a great knowledge on this criteria. It’s really help full to me. digital marketing Vijayawada

Nice info and great share. Also know some earning online ideas

Thanks for sharing this valubale information with us Keep Blogging !!

Digital Marketing Course in Vizag

Seo Training in vizag

Seo services in vizag

Digital Marketing Course in vijayawada

Digital Marketing Course in Guntur

Digital Marketing Course in Tirupati

Balloon Decoration in Vizag

said..

Thanks for sharing such an informative post https://socialprachar.com/artificial-intelligence-course-training-hyderabad/?ref=blog%20comemt/brahmasree

nice post Artificial Intelligence training institutes in kphb

nice post Artifical Intelligence training institutes in kphb

nice post

Such an informative article it is. Hope to see posts like this

data science courses in kukatpally

nice information

best information The Best Artificial Intelligence Training institute in Hyderabad

Very good information, its very usefull keep posting.

https://socialprachar.com/digital-marketing-course-training-institute-hyderabad/

very helpful

Data science with python course training Hyderabad

your blog is very helpful to me thank you.

Data science with python course training Hyderabad

Very good information

data science institute in kukatpally

nice post

nice post

Thanks for sharing a valuable information...keep sharing

Digital Marketing Training In Hyderabad

Very interesting blog Awesome post. your article is really informative and helpful for me and other bloggers too

seo training in hyderabad

smm training in hyderabad

sem training in hyderabad

digital marketing training in hyderabad

Những chia sẻ của bạn quá hay

máy khuếch tán tinh dầu giá rẻ

máy phun tinh dầu

máy khuếch tán tinh dầu tphcm

máy phun sương tinh dầu

nice

Very handy blog keep blogging. Take admission in the top data science training in Gurgaon

Great work.

spark interview questions

ok nice

máy tạo hương thơm trong phòng

máy xông tinh dầu bằng điện tphcm

máy xông hương

may xong huong tinh dau

máy đốt tinh dầu điện

Artificial Intelligence course is on demand and most adorable technology for the fresh graduates as well as professionals who are willing to Kick-start their career in robotic /artificial world. Artificial intelligence is a process of the computers or robots can perform tasks intelligently by using Machine Learning, Computer Vision, Natural Language Processing and Deep Learning techniques.

Artificial intelligence (AI) is a new factor of production and has the potential to introduce new sources of growth, changing how work is done and reinforcing the role of people to drive growth in business.

Accenture research on the impact of AI in 12 developed economies reveals that AI could double annual economic growth rates in 2035 by changing the nature of work and creating a new relationship between man and machine. The impact of AI technologies on business is projected to increase labor productivity by up to 45 percent and enable people to make more

efficient use of their time.

Social Prachar is the Top rated Artificial Intelligence training institute in Bangalore. We provide Artificial Intelligence Course in Bangalore with real time trainers and live projects. We train students from basics to advanced concepts with real-time client scenarios and case studies. Our AI Course training makes you strong in Artificial Intelligence areas and gives you a new height to the future. We provide excellent platform to the students to learn Advanced technologies and explore the Subject from Industry experts with our Artificial Intelligence Master Program.

Visit :- https://socialprachar.com/artificial-intelligence-training-in-bengaluru/

Wow, great post! I just want to leave my super-thoughtful comment here for you to read. I've made it thought-provoking and insightful, and also questioned some to the points you made in your blog post to keep you on your toes...

Thanks...

SEO Company in Dubai

SEO Company in Mumbai

Website Development Company in Meerut

thanks for sharing such an important and usefull stuff..

Digital marketing Course in Vishakapatnam

Hy vọng thời gian tới bạn sẽ có nhiều bài viết ok hơn

case máy tính cũ

vga cũ hà nội

mua bán máy tính cũ hà nội

Lắp đặt phòng net trọn gói

Its help me to improve my knowledge and skills also.im really satisfied in this sap hr session.SAP ewm Training in Bangalore

Wow it is really wonderful and awesome thus it is veWow, it is really wonderful and awesome thus it is very much useful for me to understand many concepts and helped me a lot.SAP crm Training in Bangalore

This is the exact information I am been searching for, Thanks for sharing the required infos with the clear update and required points. To appreciate this I like to share some useful information.SAP pm Training in Bangalore

It is very good and useful for students and developer.Learned a lot of new things from your post Good creation,thanks for give a good information at sap crm.SAP scm Training in Bangalore

Thank you for valuable information.I am privilaged to read this post.sap hybris Training in Bangalore

I really enjoy reading this article.Hope that you would do great in upcoming time.A perfect post.Thanks for sharing.sap mm Training in Bangalore

Thanks For sharing a nice post about sap abap Training Course.It is very helpful and sap abap useful for us.sap hr Training in Bangalore

It has been great for me to read such great information about sap wm.sap bw Training in Bangalore

Excellent information with unique content and it is very useful to know about the information.sap s4 Training in Bangalore

I think there is a need to look for some more information and resources about Informatica to study more about its crucial aspects.sap ehs Training in Bangalore

It is really explainable very well and i got more information from your site.Very much useful for me to understand many concepts and helped me a lot.sap bpc Training in Bangalore

Congratulations! This is the great things. Thanks to giving the time to share such a nice information.sap bods Training in Bangalore

The Information which you provided is very much useful for Agile Training Learners. Thank You for Sharing Valuable Information.sap abap Training in Bangalore

Excellent post for the people who really need information for this technology.sap fico Training in Bangalore

Very useful and information content has been shared out here, Thanks for sharing it.sap hana Training in Bangalore

Awesome post with lots of data and I have bookmarked this page for my reference. Share more ideas frequently.sap fiori Training in Bangalore

Excellent post with valuable content. It is very helpful for me and a good post.sap testing Training in Bangalore

Thank you for the most informative article from you to benefit people like me.sap gts Training in Bangalore

The content was very interesting, I like this post. Your explanation way is very attractive and very clear.sap apo Training in Bangalore

We have the best and the most convenient answer to enhance your productivity by solving every issue you face with the software.sap security Training in Bangalore

Took me time to read all the comments, but I really enjoyed the article.sap wm Training in Bangalore

Your post is so clear and informative. I feel good to be here reading your superb work.sap ps Training in Bangalore

I have recently visited your blog profile. I am totally impressed by your blogging skills and knowledge.sap ehs Training in Bangalore

Thanks for sharing it with us. I am very glad that I spent my valuable time in reading this post.devops Training in Bangalore

I know that it takes a lot of effort and hard work to write such an informative content like this.Amazon web services Training in Bangalore

Thank you so much for sharing. Keep updating your blog. It will very useful to the many users. seo services in kolkata | seo company in kolkata | seo service provider in kolkata | seo companies in kolkata | seo expert in kolkata | seo service in kolkata | seo company in india | best seo services in kolkata | digital marketing company in kolkata | website design company in kolkata

Nice Blog

Winter Internship

Nice Blog

Winter Internship for Engineering Students

Nice Blog. Thanks for sharing valuable piece with us. Keep posting.....!!!

Winter Training for Computer Science

Thanks for sharing this blog. Its written very nicely.

Benefits of Winter Internship

hey – great content you have. Please make sure you check out my digital marketing website https://todayhired.com/ – I have a lot of information about this and it definitely might help.

Hello, o you know that the best way to boost your brain is

visiting or contacting us

https://weiiitrading.com/our-products/moonrock-carts/buy-empty-moonrock-clear-vape-cartridges-blue-carts-dr-zodiak-atomizers-with-flavor-box-packaging/

https://weiiitrading.com/our-products/heavy-hitters-carts/buy-wholesale-new-heavy-hitter-vape-cartridges-1-0ml-ceramic-coil-empty-tank-carts-510-thread-thick-oil-atomizer/

https://weiiitrading.com/our-products/juul-carts/buy-hot-empty-ceramic-pod-disassembled-cartridges-0-7ml-1-0ml-vape-pod-carts-for-vape-juul-vape-pen-start-kit-top-quality/

https://weiiitrading.com/our-products/mario-carts/buy-peaches-and-dream/

https://weiiitrading.com/our-products/heavy-hitters-carts/buy-bubba-kush-cartridge-2-2g/

https://weiiitrading.com/our-products/mario-carts/buy-thin-mint-cookies/

Pila Brass Knuckles Online for sale online,

where to buy Buy Pila Brass Knuckles Online

buy valley online cartridge

buy space candy online

buy cannabis syrup online

buy botox online

cannabis bread

uk chese

47 dank vapewhite fire og

buy moonrock

Email Us

Contact: +1 619-537-6734

Nice information thank you so much..

Web Design and Development Bangalore | Web Designing Company In Bangalore | Web Development Company Bangalore | Web Design Company Bangalore

Free internship in chennai

Free Internship in Chennai : CodeBind Technologies offer Free Internship in Chennai for CSE, IT, ECE, EEE, ICE, EIE, Biomedical, CIVIL, Mechanical Students.

to get more - https://codebindtechnologies.com/free-internship-in-chennai/

your article on digital marketing is very interesting thank you so much.

digital marketing training in hyderabad

digital marketing course in hyderabad

digital marketing coaching in hyderabad

digital marketing training institute in hyderabad

digital marketing institute in hyderabad

This is an informative post and it is very useful and knowledgeable. therefore, I would like to thank you for the efforts you have made in writing this article.

Website Design and Development Company

Website Design Company

Website Development Company

Wordpress Customization comapany

SEO Company

digital marketing company

This is really useful and informative blog Best IELTS Online Training it is worthy to go through this blog ,thank you

This is really useful and informative blog Best IELTS Online Training it is worthy to go through this blog ,thank you

Thanks for the information. Nice blog. We build web design & development that give you the operational efficiency and independence to portray your product in the best manner.

Nice blog.Thanks for the useful information Get Internship in the USA

This is good information and really helpful for the people who need information about this.

Summer Training in Delhi

Summer Training institute in Delhi

Summer internship Programs

There was a good pace in the course, the teacher could present difficult topics in an

understandable way, omitting confusing details.

<a href="https://www.analyticspath.com/machine-learning-training-in-hyderabad>Machine Learning Course In Hyderabad </a>

Thanks for sharing,got lot of useful information.Keep Updating more.If one want to learn depth Data science training institute in btm layout is the best course to start with.

Really nice way to present your blog and information is also too good. Thanks for sharing it. If you are searching for more courses than visit here:-

Social Media Marketing course

android application development

big data analytics courses

certified ethical hacker certification

open school

python class

web design classes

mobile app development training

internships near me

cyber security internships

Awesome, nice share remind me of thefullformof GK thing.

nice information bro because you im developed the website now its leading best clients and providing services like digital marketing web designing

I am very thankful for such a wonderful article.

It's very interesting to read and easy to understand. Thanks for sharing.

We also provide the best yahoo customer support services.

yahoo customer service phone number

Thank you for taking the time to provide us with your valuable information. We strive to provide our candidates with excellent care

http://chennaitraining.in/creo-training-in-chennai/

http://chennaitraining.in/building-estimation-and-costing-training-in-chennai/

http://chennaitraining.in/machine-learning-training-in-chennai/

http://chennaitraining.in/data-science-training-in-chennai/

http://chennaitraining.in/rpa-training-in-chennai/

http://chennaitraining.in/blueprism-training-in-chennai/

Needed to compose you a very little word to thank you yet again regarding the nice suggestions you’ve contributed here.

Machine Learning Training In Hyderabad

“Amazing write-up!”

SUN International Institute for Technology and Management. To get more details visit

business management programs in visakhapatnam

This article is one of a kind, so helpful.

Top machine vision inspection system companies

Very helpful Post, keep growing

Website Development Company In Saharanpur

I just want to thank you for posting this content I really find it useful. Please keep me posted for more updates. Artificial intelligence training course in noida

I simply want to tell you that I am new to weblog and definitely liked this blog site. Machine learning course

Appsinvo is a Top Mobile App Development Company. We are a passionate team of developers, designers, and analysts who have years of experience in transforming your ideas into innovative and unique software solutions.

Mobile App development company in Asia

Top Mobile App Development Company

Top Mobile App Development Company in India

Top Mobile App Development Company in Noida

Mobile App Development Company in Delhi

Top Mobile App Development Companies in Australia

Top Mobile App Development Company in Qatar

Top Mobile App Development Company in kuwait

Top Mobile App Development Companies in Sydney

Mobile App Development Company in Europe

Mobile App Development Company in Dubai

Hola! I've been following your weblog for a while now and finally got the courage to go ahead and give you a shout out from Dallas Texas! Just wanted to mention keep up the great work! data science course

Great Information sharing .. I am very happy to read this article .. thanks for giving us go through info. Fantastic nice. I appreciate this post. Tableau training in noida

It is actually a great and helpful piece of information about Java. I am satisfied that you simply shared this helpful information with us. Please stay us informed like this. Thanks for sharing.

Java training in chennai | Java training in annanagar | Java training in omr | Java training in porur | Java training in tambaram | Java training in velachery

Thanks for sharing.,

Machine Learning training in Pallikranai Chennai

Pytorch training in Pallikaranai chennai

Data science training in Pallikaranai

Python Training in Pallikaranai chennai

Deep learning with Pytorch training in Pallikaranai chennai

Bigdata training in Pallikaranai chennai

Mongodb Nosql training in Pallikaranai chennai

Spark with ML training in Pallikaranai chennai

Data science Python training in Pallikaranai

Bigdata Spark training in Pallikaranai chennai

Sql for data science training in Pallikaranai chennai

Sql for data analytics training in Pallikaranai chennai

Sql with ML training in Pallikaranai chennai

Good one! Thanks for sharing. By the way What's the benifit of investing in funds over the individual stocks and bonds?

IRCTC shares

CSB Bank

Reserve Bank of India

hey if u looking best Current affair in hindi but before that what is current affair ? any thing that is coming about in earth political events. things that act on the meeting, if need this visit our website and get best Current affair in hindi

Brandstory is one of the best rated seo company in dubai, UAE. We are the leading seo services company in dubai. Hire Brandstory seo agency in dubai to increase your website visibility in search engines. Enhance your website ranking in google and other search engines with brandstory seo services in dubai. We are top seo companies in dubai have more happy seo clients

I had seen your post it is intresting and helpful. Thanks for your post, please visit our blog Beauty Blog

Nice blog very helpful your site please visit our blog "Beauty Blog"

thankyou so much for sharing your thoughts. visit our blog too Beauty Blog

This Blog has Very useful information. Worth visiting. Thanks to you .keep sharing this type of informative articles with us.| Animation institute In Hyderabad

Thanks for sharing such information. This is really helpful for me. You can also visit blog. Blogs for Women

machine learning hint from you was useful to me.....

acte reviews

acte velachery reviews

acte tambaram reviews

acte anna nagar reviews

acte porur reviews

acte omr reviews

acte chennai reviews

acte student reviews

nice thanks for sharing.....................................!

Active Directory online training

Active Directory training

Appian BPM online training

Appian BPM training

arcsight online training

arcsight training

Build and Release online training

Build and Release training

Dell Bhoomi online training

Dell Bhoomi training

Dot Net online training

Dot Net training

ETL Testing online training

ETL Testing training

Hadoop online training

Hadoop training

Tibco online training

Tibco training

Tibco spotfire online training

This article is amazing.Thanks for sharing.Visit our blog too Work From Home Jobs For Women

The development of artificial intelligence (AI) has propelled more programming architects, information scientists, and different experts to investigate the plausibility of a vocation in machine learning. Notwithstanding, a few newcomers will in general spotlight a lot on hypothesis and insufficient on commonsense application. machine learning projects for final year In case you will succeed, you have to begin building machine learning projects in the near future.

Projects assist you with improving your applied ML skills rapidly while allowing you to investigate an intriguing point. Furthermore, you can include projects into your portfolio, making it simpler to get a vocation, discover cool profession openings, and Final Year Project Centers in Chennai even arrange a more significant compensation.

Data analytics is the study of dissecting crude data so as to make decisions about that data. Data analytics advances and procedures are generally utilized in business ventures to empower associations to settle on progressively Python Training in Chennai educated business choices. In the present worldwide commercial center, it isn't sufficient to assemble data and do the math; you should realize how to apply that data to genuine situations such that will affect conduct. In the program you will initially gain proficiency with the specialized skills, including R and Python dialects most usually utilized in data analytics programming and usage; Python Training in Chennai at that point center around the commonsense application, in view of genuine business issues in a scope of industry segments, for example, wellbeing, promoting and account.

The Nodejs Training Angular Training covers a wide range of topics including Components, Angular Directives, Angular Services, Pipes, security fundamentals, Routing, and Angular programmability. The new Angular TRaining will lay the foundation you need to specialise in Single Page Application developer. Angular Training

Nice article , Thanks for sharing and please also visit our blog Jobs for Pregnant Women

Thanks for sharing such information. This is really helpful for me. You can also visit blog. Jobs for Pregnant Women

nice post seo company in dubai

nice post seo company in dubai

Many thanks for providing this information.

Data Science Training in Noida

Data Science Training institute in Noida

Hi! This is my first comment here so I just wanted to give a quick shout out and say I genuinely enjoy reading your blog posts. Can you recommend any other Fashion Write For Us blogs that go over the same topics? Thanks a ton!

I feel really happy to have seen your webpage.I am feeling grateful to read this.you gave a nice information for us.please updating more stuff content...keep up!!

python training in bangalore

python training in hyderabad

python online training

python training

python flask training

python flask online training

python training in coimbatore

python training in chennai

python course in chennai

python online training in chennai

I just got to this amazing site not long ago. I was actually captured with the piece of resources you have got here. Big thumbs up for making such wonderful blog page!

Salesforce Training in Chennai

Salesforce Online Training in Chennai

Salesforce Training in Bangalore

Salesforce Training in Hyderabad

Salesforce training in ameerpet

Salesforce Training in Pune

Salesforce Online Training

Salesforce Training

Thank you for what you have shared about google. Actually I really want to learn about google but do not know how? Máy ép dầu thực vật Nanifood, Máy ép tinh dầu Nanifood, Máy ép dầu Nanifood, Máy lọc dầu Nanifood, Máy ép dầu, May ep dau, Máy lọc dầu, Máy ép tinh dầu, Máy ép dầu thực vật, Máy ép dầu gia đình, Máy ép dầu kinh doanh, Bán máy ép dầu thực vật, Giá máy ép dầu,..........................

Post a Comment