In 1993, R.Holte noted that "Very simple classification rules perform well on most commonly used datasets". His very simple rules are basically binary classifiers that make decision based on value of a single attribute.

In the race for latest and greatest classifiers it's easy to forget that many kinds of classification tasks in 1993 still come up today, so conclusions of Holte's paper still apply.

I came across this effect couple of years ago on a set of document categorization experiments. Folks at WinnowTag wanted me to look into various methods for automatically tagging news/blog items. The idea was that a user manually tags some articles, the system learns and gradually takes over the role of tagging. You can approach it as a straight-forward binary classification task since number of errors represents the number of tag additions/deletions needed from user to fix the tagging.

At first I thought about jumping in and coding some latest and greatest approach for multi-tag labeling like one in from NIPS'04 paper, but as a sanity check, I decided to try existing Weka's classifiers on the dataset.

Nice thing about Weka is that it provides a unified command line interface to all of its classifiers, so you could make a simple Python script like this to run 64 different classifiers on your classification task with default parameters provided by Weka.

4000 hours of EC2 runs later, I got the results. Simplest classifiers like Naive Bayes, OneR (from Holt's paper) made the smallest number of errors. Weka's BayesNet, J48, VotedPerceptron, LibSVM performed as bad or worse as predicting "False" for every tag. Logistic Regression, Neural Networks, AdaboostM1 failed to finish after a day of training.

In retrospect, it makes sense -- in the original dataset there were 35 tags and 5-40 documents per tag, sometimes as small as a couple of sentences, and with the amount of inherent noise in tagging, that's simply not enough data to identify some of the deeper patterns that higher complexity classifiers are made for. In this settings, I wouldn't expect "advanced" classifiers to give a significant improvement over Naive Bayes even after significant tuning of hyper-parameters

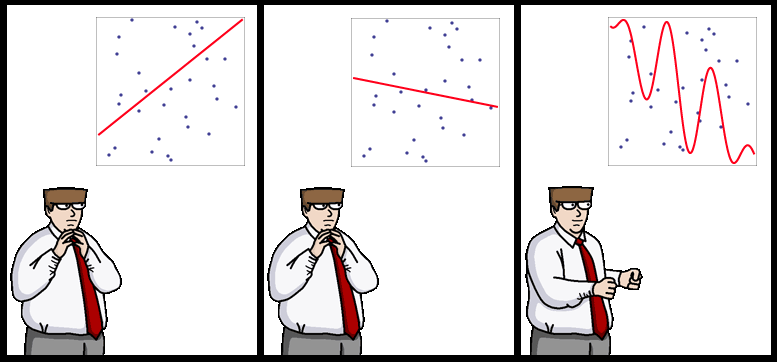

It's been suggested that more data beats better algorithms, but here, I would say that when you have little data/prior knowledge, simplest learners work as well as the most advanced ones.

PS: $1 for the caption to the strip above

9 comments:

Yaroslav,

I have been fascinated by this example for a while now.

I wish that one day a robust explanation will eventually bubble up for this behavior. Do you think that it could somehow be connected cleanly to the Donoho-Tanner Phase transition ( http://goo.gl/CYAs check the first paper for a clear explanation of what it is ) ?

Cheers,

Igor.

Hm....I don't immediately see a phase transition phenomenon. I expect as the size of the dataset is increased, complex classifiers will gradually bubble to the top. If you connect it kind of problems seen in Donoho's paper, the analogy would be -- take some high dimensional problem, fix dimension+noise, and plot reconstruction error as the function of number of samples. Do you you expect the function to be mostly smooth, or have a jump?

Hi,

as you already noted, the choice of parameters is important, and one cannot draw such conclusions from the experiment that was run without a proper cross-validation. Also, AdaBoost could have converged if you required less iterations. Though, I don't argue with the conclusion itself.

I've never worked with NLP data but it seems your documents had some underlying structure. May be it could have been exploited to recognize more complicated patterns is spite of the small size of the dataset, couldn't it?

As for the strip title, try "the overweighted overfits." =)

The problem with small sample size is that if you don't severely restrict the complexity of allowable patterns, it's going to pick up spurious interactions due to noise.

Not sure about structure, but the idea I had was to have some principled way of incorporating prior knowledge into learning. For instance, have the machine learn a model and then have the user look at it and "edit out" spurious interactions. This would need a model where weights/components have a common sense interpretation so AdaBoost/SVM's are out.

I think the simple message of this post is "beware of overfitting".

THANKS FOR THE INFORMATION...

<a href="http://www.chloros.in/digital-marketing-internship.htmlhttp://www.chloros.in/digital-marketing-internship.html>Digital Marketing Internship Program in Bangalore</a>

Excellent machine learning blog,thanks for sharing...

Seo Internship in Bangalore

Smo Internship in Bangalore

Digital Marketing Internship Program in Bangalore

Kudos to the brilliant minds shaping our future! Scientists, the unsung heroes of progress, tirelessly unravel the mysteries of the universe, pushing the boundaries of knowledge and innovation. Their dedication illuminates the path to a brighter, more sustainable world. Gratitude to these modern-day pioneers for their invaluable contributions to humanity's journey forward!

It took me a long time to lose the extra weight and I tried different options. I believe that weight should be lost gradually and correctly in order not to gain it back. I found an interesting article on this https://valhallavitality.com/blog/discover-the-benefits-and-side-effects-of-mic-b12-injections-for-your-health-and-wellbeing site about mic b12 injections. After all, this injection helps to improve metabolism and it is an excellent assistant for those who want to lose weight. Be sure to read on the site information about side effects, because it is important to know about it if you decide to try injections. Personally, they have helped me get in shape

Post a Comment