notebook

This is a demonstration of the Expectation-Maximization algorithm. You need Mathematica or the free Mathematica Player to run it.

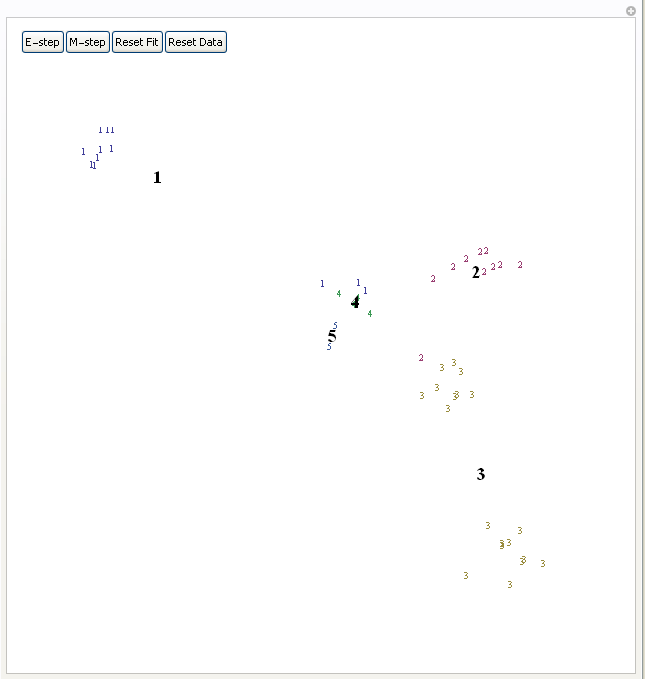

The Expectation-Maximization algorithm tries to find the centers of clusters in the data. It first assigns each point to a random cluster and places each cluster center at a random position on the plane, then iterates E and M steps. In this demo, the E-step moves each cluster center to the mean of all datapoints currently assigned to that cluster, and the M-step reassigns each datapoint to the cluster whose center is closest to it. (Textbook presentations usually name these the other way around: the E-step computes the assignments and the M-step re-estimates the centers.) Bold numbers indicate the current cluster centers; small numbers indicate datapoints and their current cluster assignment. You can view this as a method for finding an approximate maximum likelihood fit of a Gaussian mixture with a fixed number of Gaussians and identity covariance matrices. Press "E-step" and "M-step" in alternation to see the algorithm in action.
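For readers without Mathematica, the two alternating updates described above (the mean update and the nearest-center reassignment) can be sketched in plain Python. This is a minimal illustration and not the notebook's code: the function names `update_centers`, `assign_points` and `run` are hypothetical, the random centers are drawn from the unit square for simplicity, and leaving an empty cluster's center where it was is an assumption made here. With hard assignments like these, the procedure coincides with what is commonly called k-means.

```python
import random


def update_centers(points, labels, centers):
    """Move each center to the mean of the points currently assigned to it."""
    new_centers = []
    for k, center in enumerate(centers):
        members = [p for p, lab in zip(points, labels) if lab == k]
        if members:
            n = len(members)
            new_centers.append((sum(x for x, _ in members) / n,
                                sum(y for _, y in members) / n))
        else:
            # Assumption: a cluster with no points keeps its previous center.
            new_centers.append(center)
    return new_centers


def assign_points(points, centers):
    """Assign each point to the cluster whose center is nearest."""
    def sq_dist(p, c):
        return (p[0] - c[0]) ** 2 + (p[1] - c[1]) ** 2

    return [min(range(len(centers)), key=lambda k: sq_dist(p, centers[k]))
            for p in points]


def run(points, n_clusters, n_iters=10, seed=0):
    """Random initialization followed by alternating the two updates."""
    rng = random.Random(seed)
    # Random initial assignment and random initial centers (unit square).
    labels = [rng.randrange(n_clusters) for _ in points]
    centers = [(rng.random(), rng.random()) for _ in range(n_clusters)]
    for _ in range(n_iters):
        centers = update_centers(points, labels, centers)
        labels = assign_points(points, centers)
    return centers, labels
```

Calling `run(points, 2)` on a list of `(x, y)` tuples returns the final centers and one cluster index per point. Calling `update_centers` and `assign_points` by hand, one at a time, mirrors pressing the two buttons in alternation.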

4 comments:

I have tried, but it didn't work under my Mathematica 8.


